Hot Take on How GPT-5.2 Compares in Document Summarization

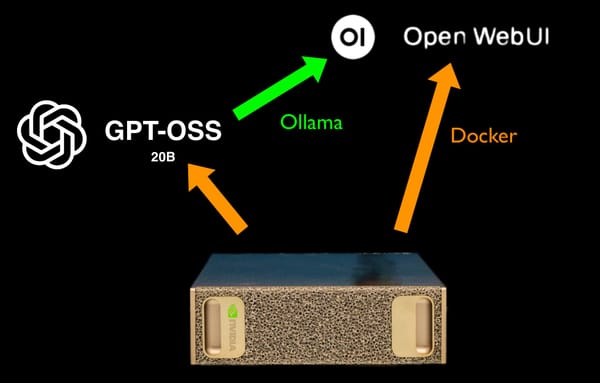

OpenAI's GPT-5.2 dropped and is available on my accounts as of this morning. I wanted to share my initial hot take on how it performs in business applications compared to older models like GPT-5.1, GPT-4.1, and Llama-3.1, as well as gpt-oss-20b.